Nvidia’s data center revenue in fiscal Q2 exploded by 141% sequentially and 171% year-over-year. Despite a growing roster of competitors in the data center AI accelerator market, Nvidia remains the dominant force in the industry.

The company’s GPUs and CUDA platform have long been the default choices for developers of data center AI software, but recent developments have caused demand to spike.

The ChatGPT Factor

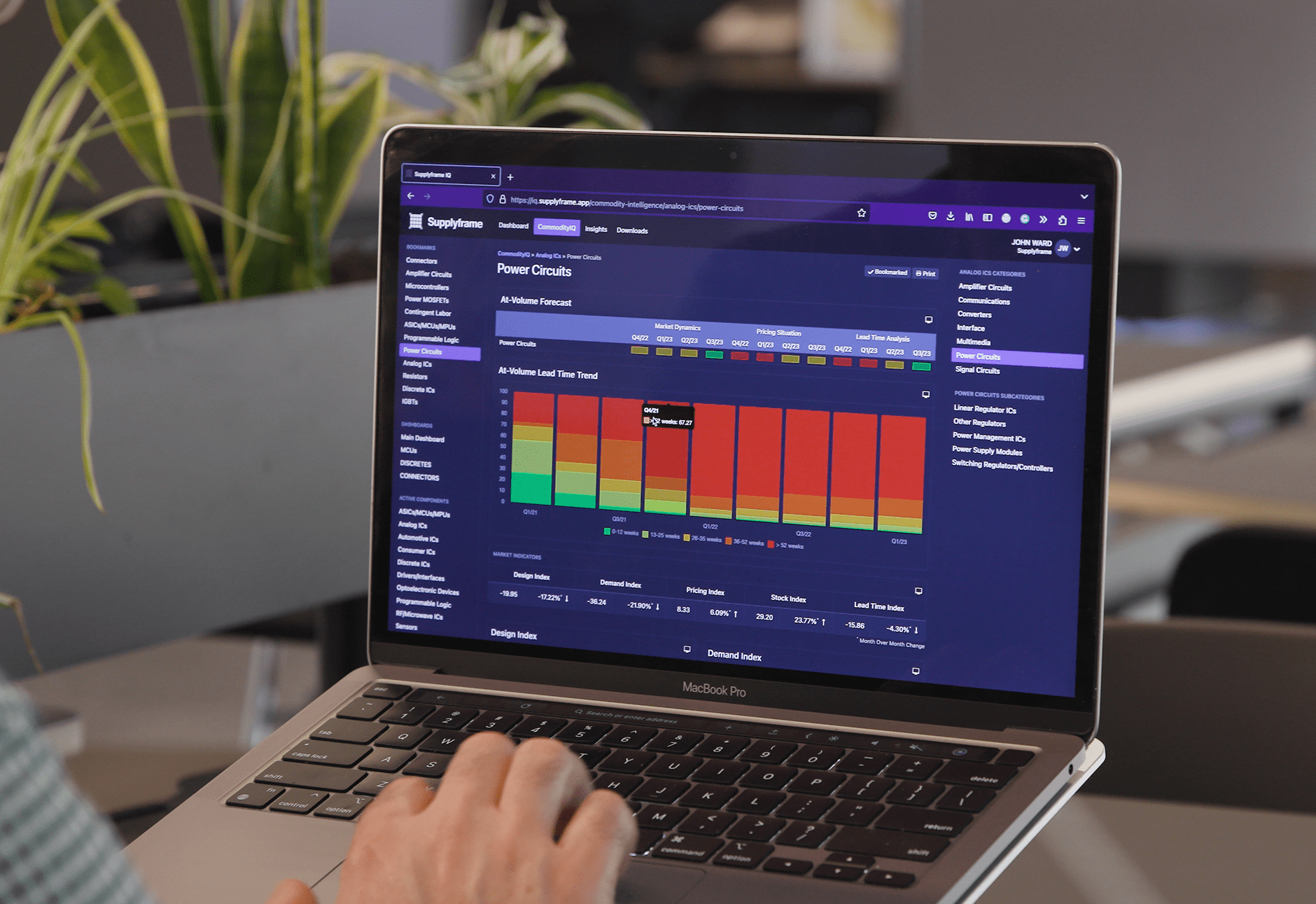

The generative AI boom triggered by the success of ChatGPT has sent Nvidia’s growth into hyperdrive, with the company’s chips supplying the processing horsepower behind the underlying language model. According to an estimate by Commodity IQ, Nvidia has held a 65% to 70% share of the data center AI market in recent years.

Building on that position, Nvidia reported in its fiscal Q2 earnings release massive uptake of the H100, the company’s flagship data center AI GPU and the successor to the industry-standard A100. The company also began shipping its GH200 Grace Hopper Superchip, which pairs Nvidia’s Arm-based Grace CPU with a GPU built on the same Hopper architecture used in the H100. This device opens the door for Nvidia to compete directly with Intel and AMD in the data center CPU market, expanding Nvidia’s addressable market and offering an alternative to the dominant x86 architecture.

How These Developments Affected the AI GPU Market

Nvidia’s dominance, combined with high prices and limited availability, is putting pressure on buyers at firms that produce data center AI equipment. An H100 PCIe card reportedly sells for as much as $30,000.

The AI GPU market is in a critical shortage, and H100s are increasingly difficult to source. Nvidia is projecting overall company revenue growth of 18% sequentially and 139% year-over-year for fiscal Q3, a sign that demand is still climbing, so the shortfall is likely to grow more acute this year.

Given these factors, server vendors should seek alternatives to Nvidia. CUDA’s extensive mindshare among software developers is a significant competitive barrier for alternative suppliers such as AMD and Intel, but both companies have put the pieces in place, including their own software stacks (AMD’s ROCm and Intel’s oneAPI), for customers to make a smooth transition to their chips and development tools.

With demand for AI accelerator chips expected to keep rising, now is the time for companies to begin evaluating and adopting alternatives to Nvidia.